As organizations accelerate the integration of AI into daily operations, legal and compliance risks are becoming more pronounced. Many companies face a difficult balance between improving efficiency and safeguarding confidential and privileged information. Legal transcription services provide a controlled, compliance-focused alternative, yet some businesses continue to adopt automated tools without fully evaluating data security, accuracy, and regulatory implications. Legal departments increasingly recognize that tools designed to streamline workflows can also introduce liability exposure, particularly as AI capabilities evolve faster than governance frameworks and internal controls.

In this article, you’ll learn how:

- AI transcription tools can expose confidential legal communications, creating risks for attorney-client privilege and data security.

- AI-generated transcripts may contain hallucinations or inaccuracies that expose organizations to legal liability in sectors such as healthcare, law enforcement, and litigation.

- Automated captioning and transcription systems often fail to meet ADA accessibility standards, potentially exposing organizations to compliance violations.

- Organizations can avoid privacy and regulatory risks by carefully evaluating AI vendors' data storage, retention, and cross-border processing practices.

AI Breaches the Sacred Attorney-Client Bond

One of the most significant legal risks associated with AI in legal practice is the potential erosion of the attorney-client privilege. Many law firms now rely on AI tools for routine administrative functions, including meeting transcription. While these systems can improve efficiency, they also introduce confidentiality and data governance concerns that require deliberate risk assessment.

The primary issue is how AI transcription providers collect, store, process, and distribute sensitive information. Automatically generated transcripts are often shared with meeting participants or stored in third-party cloud environments.

Without strict access controls, encryption standards, and clear data retention policies, confidential communications may be exposed to unauthorized individuals. The use of AI does not, by itself, waive the attorney-client privilege. However, privilege can be jeopardized if reasonable safeguards are not in place to protect the information.

A widely discussed incident involved AI researcher Alex Bilzerian, who reported that Otter.ai sent him a full meeting transcript he was never meant to receive. The transcript reportedly included private discussions that took place after a venture capital firm's Zoom meeting had formally concluded.

Bilzerian shared the incident publicly on X, where the post received millions of views. Although there was no indication that the information was maliciously exploited, the episode underscores how automated transcription workflows can create confidentiality risks when controls fail.

For legal professionals, the takeaway is not that AI transcription is inherently unsafe, but that governance, vendor due diligence, and secure handling protocols are essential to preserve privilege and maintain compliance obligations.

This is particularly important when comparing automated tools with specialized providers, such as court transcription services, which typically operate under stricter procedural safeguards, confidentiality standards, and chain-of-custody requirements designed for legal proceedings.

The implications of such breaches also extend beyond immediate confidentiality concerns. When an AI tool discloses confidential details to outside parties, a court may find that privilege has been waived for those communications and, in some cases, for related discussions on the same matter. This risk is especially acute in litigation, where inadvertent disclosure of privileged information can affect case outcomes.

Other Implications of Using AI in the Legal Landscape

Here are more things to watch out for when using AI in legal settings.

- AI in Jury Selection: AI systems that analyze jurors’ social media activity and background data may introduce bias into jury selection, potentially influencing decisions in ways that challenge the fairness of the process.

- Legal Fee Reshaping: As AI increasingly performs tasks traditionally handled by junior lawyers, law firms may need to adjust billing models and rethink how legal services are priced.

- Courtroom Decision Support: The use of AI tools to assist judges or courts with case analysis could raise concerns about accountability, transparency, and the extent of human oversight in legal decisions.

- Translation Challenges: AI-powered translation used in cross-border legal matters can pose risks if nuanced legal meanings or jurisdiction-specific terminology are misinterpreted.

- Digital Knowledge Banks: Law firms that develop internal AI-powered knowledge databases may improve efficiency, but heavy reliance on standardized information could unintentionally limit diverse legal reasoning.

- Smart Contract Evolution: Integrating AI with blockchain-based smart contracts may automate contract execution, but it also raises questions about governance, dispute resolution, and the role of human review.

- Expert Testimony Analysis: AI tools designed to analyze or fact-check expert testimony may help identify inconsistencies, though they often struggle to evaluate credibility, intent, and contextual factors that humans interpret more effectively.

Institutional Risks of AI Transcription and Generative Tools

Across several sectors, organizations are beginning to confront a common dilemma: while AI tools promise efficiency, they also introduce legal, ethical, and compliance risks that institutions may not be prepared to manage. Law enforcement agencies, healthcare providers, and universities have encountered situations in which the potential benefits of AI collide with requirements for accuracy, accountability, and privacy.

To understand the scope of the issue, it is helpful to examine how these risks appear in different institutional settings.

| Sector | Main Concern | Key Compliance Issue |
| --- | --- | --- |
| Law Enforcement | AI-generated inaccuracies in police reports | CJIS security rules and potential Brady disclosure issues |
| Healthcare | AI transcription hallucinations in medical documentation | HIPAA privacy and medical malpractice exposure |
| Education | AI transcription tools capturing sensitive classroom discussions | FERPA compliance and recording consent laws |

Law Enforcement: Accuracy and Accountability

Law enforcement agencies face particular challenges when using AI in report writing. The King County Prosecuting Attorney’s Office recently implemented a policy rejecting police report narratives generated with AI assistance. The decision was tied to concerns about CJIS compliance, privacy, and the reliability of AI-generated information.

Under the updated rules, officers must certify their reports under penalty of perjury. Even minor AI-generated inaccuracies that escape review could later be exposed in court. Because many AI systems do not preserve intermediate drafts, officers may also struggle to demonstrate whether a mistake originated from the AI system or from their own oversight.

These risks extend beyond simple documentation errors. Inaccurate reports can compromise investigations, potentially contribute to wrongful arrests, and trigger Brady obligations if credibility issues arise during litigation.

Similar concerns apply to government transcription services, where transcripts of interviews, hearings, and official proceedings may become part of the public record. If AI-generated content introduces inaccuracies into these documents, it could undermine evidentiary reliability and complicate legal proceedings.

Healthcare: Hallucinations in Clinical Documentation

Healthcare was among the earliest sectors to adopt AI transcription tools, particularly for documenting patient interactions. However, concerns about accuracy have become a central issue.

Some studies analyzing transcription tools built on Whisper, OpenAI's speech recognition model, have reported hallucinations, where the system generates content that does not appear in the source audio. In certain cases, these hallucinations include entire sentences that were never spoken. Reported hallucination rates vary between studies, but researchers have documented hallucinations in a substantial share of analyzed transcripts.

These errors are not limited to minor word substitutions. Researchers have identified examples that include invented medical treatments, statements inserted during periods of silence, and other fabricated content.

In a clinical context, inaccurate transcripts can affect patient records and treatment decisions. The legal consequences could include malpractice claims or regulatory scrutiny. This risk is especially significant in medicolegal transcription, where medical documentation may later be used in insurance disputes, disability evaluations, or court proceedings. Errors or AI-generated hallucinations in these records could compromise the evidentiary reliability of the documentation.

Compliance requirements add another layer of complexity. Healthcare providers must ensure that any AI system handling patient data complies with HIPAA privacy and security rules, yet many AI services do not explicitly guarantee HIPAA-compliant data handling, for example by signing a business associate agreement (BAA).

Legal and Compliance Risks of AI in Accessibility and Data Security

AI tools such as transcription and captioning systems are increasingly used to improve accessibility and automate documentation. However, organizations adopting these technologies must consider the legal and compliance risks associated with accuracy, accessibility standards, and data protection. Regulatory requirements such as the Americans with Disabilities Act (ADA) and global data protection laws impose strict obligations that AI systems may not consistently meet.

Accessibility Compliance Risks (ADA)

The Americans with Disabilities Act (ADA) creates legal exposure for organizations that rely on inaccurate AI-generated captions or transcripts. Several universities, including the University of Maryland, Harvard, and MIT, have previously faced lawsuits over inadequate captioning that allegedly violated accessibility requirements.

Although AI captioning technology has improved, accuracy still falls short of the standards expected for accessibility compliance. For example, automatic captioning systems may achieve around 70% accuracy in some cases, while general AI transcription systems often reach about 86% accuracy under favorable conditions.

Accessibility guidelines typically require captions to achieve approximately 99% accuracy. This gap creates liability risk for organizations that depend solely on automated captioning, especially because transcription accuracy can degrade significantly in the presence of background noise, strong accents, or complex terminology. Inaccurate captions may also prevent individuals with hearing impairments from fully participating in educational or professional activities, potentially leading to discrimination claims.
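To put those percentages in perspective, here is a minimal Python sketch. It assumes accuracy is measured at the word level as 1 minus the word error rate (WER), a common convention in speech recognition. The figures above don't state how they were measured, so treat this as an illustration, not as how any particular vendor scores its output. The function names (`word_error_rate`, `word_accuracy`, `expected_errors`) are hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance (substitutions + deletions + insertions)
    divided by the number of words in the reference transcript."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # dist[i][j] = fewest edits to turn the first i reference words
    # into the first j hypothesis words.
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dist[0][j] = j  # insert all j hypothesis words

    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            mismatch = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,             # spoken word dropped by the tool
                dist[i][j - 1] + 1,             # extra word inserted (e.g., hallucinated)
                dist[i - 1][j - 1] + mismatch,  # word matched or substituted
            )
    return dist[len(ref)][len(hyp)] / len(ref)


def word_accuracy(reference: str, hypothesis: str) -> float:
    """Accuracy as 1 - WER (can drop below zero if the tool inserts many words)."""
    return 1.0 - word_error_rate(reference, hypothesis)


def expected_errors(word_count: int, accuracy: float) -> int:
    """Approximate number of word errors in a transcript of a given length."""
    return round(word_count * (1.0 - accuracy))


if __name__ == "__main__":
    # One substituted word out of five reference words.
    print(word_accuracy("the patient denies chest pain",
                        "the patient reports chest pain"))
    # Two hallucinated words appended to a three-word phrase.
    print(word_error_rate("no known allergies",
                          "no known allergies to penicillin"))
    for acc in (0.70, 0.86, 0.99):
        print(acc, expected_errors(10_000, acc))
```

Under this assumption, a 10,000-word deposition transcript would contain roughly 3,000 errors at 70% accuracy, 1,400 at 86%, and 100 at 99%. Because hallucinated phrases count as insertions, fabricated text lowers the score even when every spoken word was captured.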

Data Security and Third-Party AI Access

AI software can introduce new security vulnerabilities when organizations allow external platforms to access internal communications or records. Many AI tools integrate directly with calendars, meetings, messaging systems, or organizational databases. Without strict controls, these integrations may expose confidential information or sensitive communications to third-party vendors. Organizations must carefully evaluate how AI providers access, store, and process internal data before adopting these systems.

Data Retention and AI Training Practices

Another concern is how AI providers retain and use the data they collect. Some platforms may store transcripts, recordings, or other information and potentially use that data for model training or system improvements. Organizations must verify that vendor data-handling practices align with their legal obligations and internal data retention policies. Failing to understand these practices can create risks related to unauthorized data exposure or improper reuse of sensitive information.

Cross-Border Data Protection Compliance

Many AI service providers operate globally, which adds complexity to regulatory compliance. Organizations may need to comply with multiple data protection laws simultaneously, such as the European Union’s General Data Protection Regulation (GDPR), the California Consumer Privacy Act (CCPA), and other regional privacy regulations.

Because AI systems often process data across distributed infrastructure, ensuring compliance with jurisdiction-specific rules can become difficult if data transfers occur without clear geographic controls.

Why Ditto’s Human Transcription Is Still the Gold Standard

I know I’ve said this before, but it bears repeating: the consequences of inaccurate transcription are heavy, far-reaching, and unpredictable. Some potential effects of incorrect transcripts include miscommunication, legal ramifications, loss of credibility, misinformation, operational errors, medical errors, negative financial consequences, damaged relationships, and wasted time and resources.

Ditto offers 100% human transcription: no AI, no automated tools, no soulless machines like ChatGPT listening to your recordings and spitting out inaccurate transcripts by the boatload.

We’re a professional transcription company, so we won’t settle for giving our clients the bare minimum. Our services come with the following features:

- 100% human transcription: Ditto’s human transcription guarantees the highest possible accuracy, from initial checks to final edits.

- U.S.-based Transcribers: We only work with native English speakers to ensure quality, comprehension, and accuracy.

- Certified Transcripts: Any transcripts involved in litigation can be certified, adding an extra layer of protection.

- No long-term contracts: We operate on a pay-as-you-go basis; give us as much or as little work as you need, without paying through the nose for quality transcription.

- Fast turnaround times: To ensure your workflow runs smoothly, you’ll get your transcripts in as little as 24 hours.

- Different legal transcription pricing options: We offer rush jobs or economical rates for longer turnaround times to match different budgets.

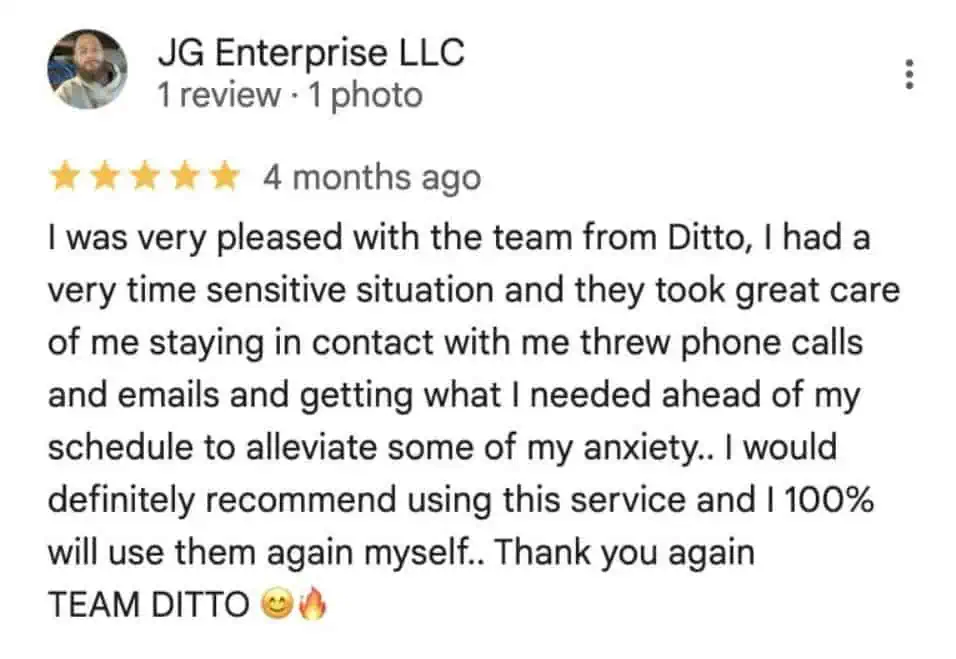

Still not convinced? Here’s what client testimonials say about our services:

Ditto Transcripts is a Denver, Colorado-based FINRA, HIPAA, and CJIS-compliant transcription services company that provides fast, accurate, and affordable transcripts for individuals and companies of all sizes. Call (720) 287-3710 today for a free quote.